TurtleBot SLAM (with RTAB-Map, Hand-Gestures, Face Recognition & AR Code Tracking)

turtlebot, hand gesture recognition, face recognition, fisherfaces, OpenCV, robotics

Goal

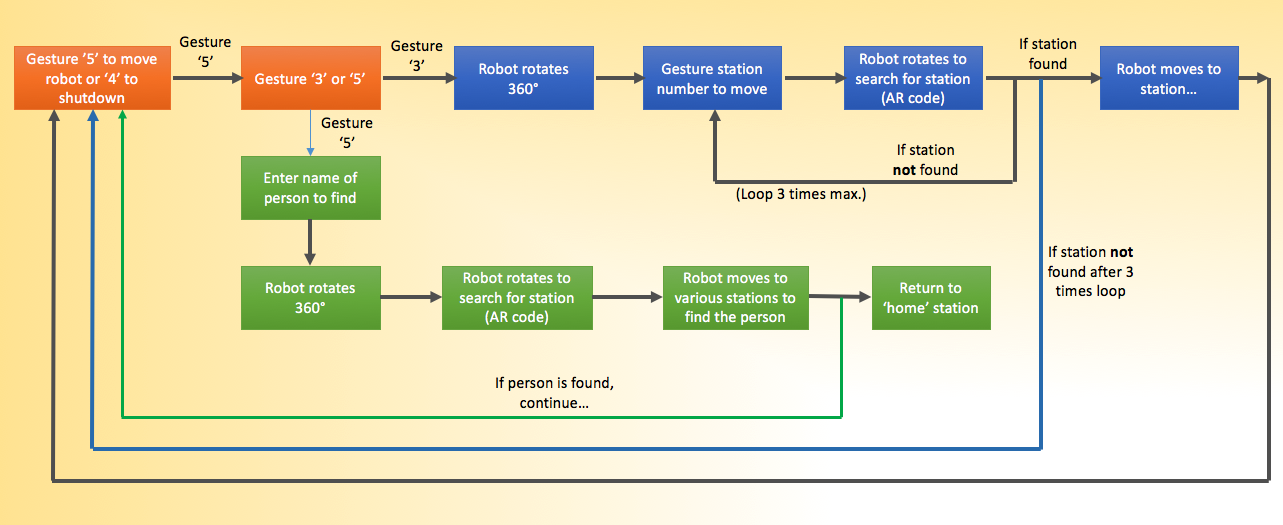

Navigate a TurtleBot using hand gestures to find target locations marked with AR codes and/or to find a specific person using face recognition.

Overview

This project consists of the following 4 tasks:

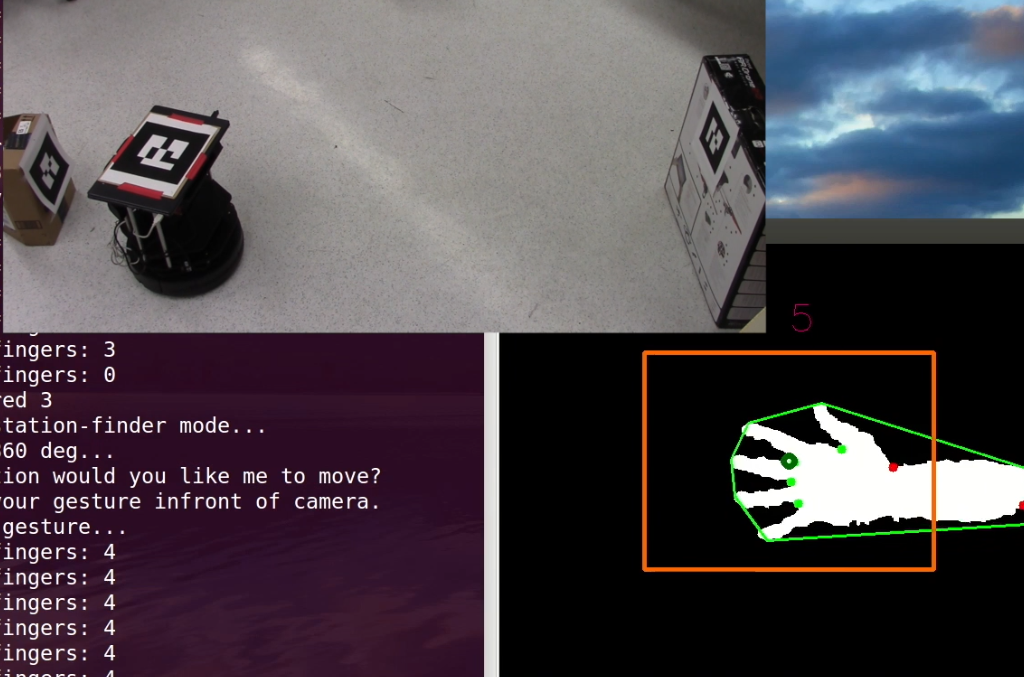

1. Determine the number of fingers held up in front of an ASUS Xtion Pro camera.

2. Navigate the TurtleBot through an unknown environment using an RGB-D SLAM approach while concurrently building a 3D map of the environment; the robot first finds the target station marked with the AR code matching the number detected in (1), then moves towards that station.

3. Capture, train on, and recognize people's faces in real time using a simple GUI.

4. Move the robot to various stations to find a specific person from the database built in (3).
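The link between the gesture task and the navigation task, where the detected finger count picks out the station whose AR code carries the same number, can be sketched as a simple lookup. This is a minimal illustration only: the `Marker` class, the `select_target` function, and the sample coordinates are hypothetical names and values, not taken from the project's code.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Marker:
    """A detected AR marker (hypothetical structure for illustration)."""
    marker_id: int  # number encoded by the AR code
    x: float        # assumed position in the map frame, in metres
    y: float


def select_target(finger_count: int, markers: Sequence[Marker]) -> Optional[Marker]:
    """Return the marker whose ID equals the gestured number, if it has been seen."""
    for marker in markers:
        if marker.marker_id == finger_count:
            return marker
    return None  # target not seen yet: the robot would keep exploring


# Made-up detections: stations 1 and 3 have been observed so far.
seen = [Marker(1, 0.5, 2.0), Marker(3, 4.2, -1.0)]
target = select_target(3, seen)
```

When `select_target` returns `None`, the robot has not yet observed the requested station and would continue mapping until the marker comes into view.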

The overall execution of the project is summarised in the flow-chart below:

Demo

Project Website

A tutorial on launching the project with your TurtleBot is available on GitHub: http://github.com/patilnabhi/tbotnav

Project Details

- Hand-gesture recognition: Using a Kinect, OpenCV and ROS, develop an API to recognize the number of fingers held up, from 0 to 5.

- Face recognition: Using a webcam, OpenCV and ROS, develop an API to create a database of people’s faces and recognize faces in real time.

- TurtleBot SLAM: Using TurtleBot, Kinect and ROS, implement RTAB-Map (an RGB-D SLAM approach) to navigate TurtleBot in an unknown environment.
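One common way to count fingers from a segmented hand contour is to look at its convexity defects: the deep, narrow valleys between raised fingers. The sketch below shows only the geometric test on such defects and is not necessarily the method this project uses. The function names, the 90-degree angle threshold, and the 20-pixel depth threshold are assumptions chosen for illustration; in an OpenCV pipeline the start, end, and far points would typically come from `cv2.convexityDefects`.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]
# One defect: (start point, end point, farthest valley point, valley depth in pixels)
Defect = Tuple[Point, Point, Point, float]


def angle_at(far: Point, start: Point, end: Point) -> float:
    """Angle in degrees at `far` between the rays towards `start` and `end`."""
    a = math.dist(start, end)
    b = math.dist(far, start)
    c = math.dist(far, end)
    if b == 0 or c == 0:
        return 180.0  # degenerate defect: treat as flat
    # Law of cosines, clamped to guard against rounding error.
    cos_angle = (b * b + c * c - a * a) / (2 * b * c)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))


def count_fingers(defects: Sequence[Defect],
                  max_angle_deg: float = 90.0,  # assumed threshold
                  min_depth: float = 20.0) -> int:  # assumed threshold
    """Estimate raised fingers from the valleys between them.

    Each deep, narrow valley separates two fingers, so n valleys suggest
    n + 1 fingers. With no valleys this returns 0; a single raised finger
    also produces no valleys and would need an extra cue, omitted here.
    """
    gaps = sum(
        1 for start, end, far, depth in defects
        if depth >= min_depth and angle_at(far, start, end) <= max_angle_deg
    )
    return min(gaps + 1, 5) if gaps > 0 else 0


# Made-up defects: two narrow valleys and one wide one that should be ignored.
sample = [
    ((0.0, 0.0), (2.0, 0.0), (1.0, -5.0), 30.0),
    ((3.0, 0.0), (5.0, 0.0), (4.0, -5.0), 30.0),
    ((0.0, 0.0), (10.0, 0.0), (5.0, -1.0), 30.0),
]
fingers = count_fingers(sample)
```

Filtering on both angle and depth helps reject shallow contour noise and the wide valley along the wrist, at the cost of two thresholds that would need tuning for the camera and working distance.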

Future work

- Object tracking: Replace AR code tracking and get TurtleBot to find specific objects in the environment.

- RTAB-Map & beyond: Explore the capabilities of RTAB-Map and RGB-D SLAM to make the navigation more robust.

- Simple is beautiful: Streamline the overall execution of the project so that it is simpler to use and more interactive.

This project was completed as part of the MS in Robotics (MSR) program at Northwestern University (NU).